Epoch's work is free to use, distribute, and reproduce provided the source and authors are credited under the Creative Commons BY license.


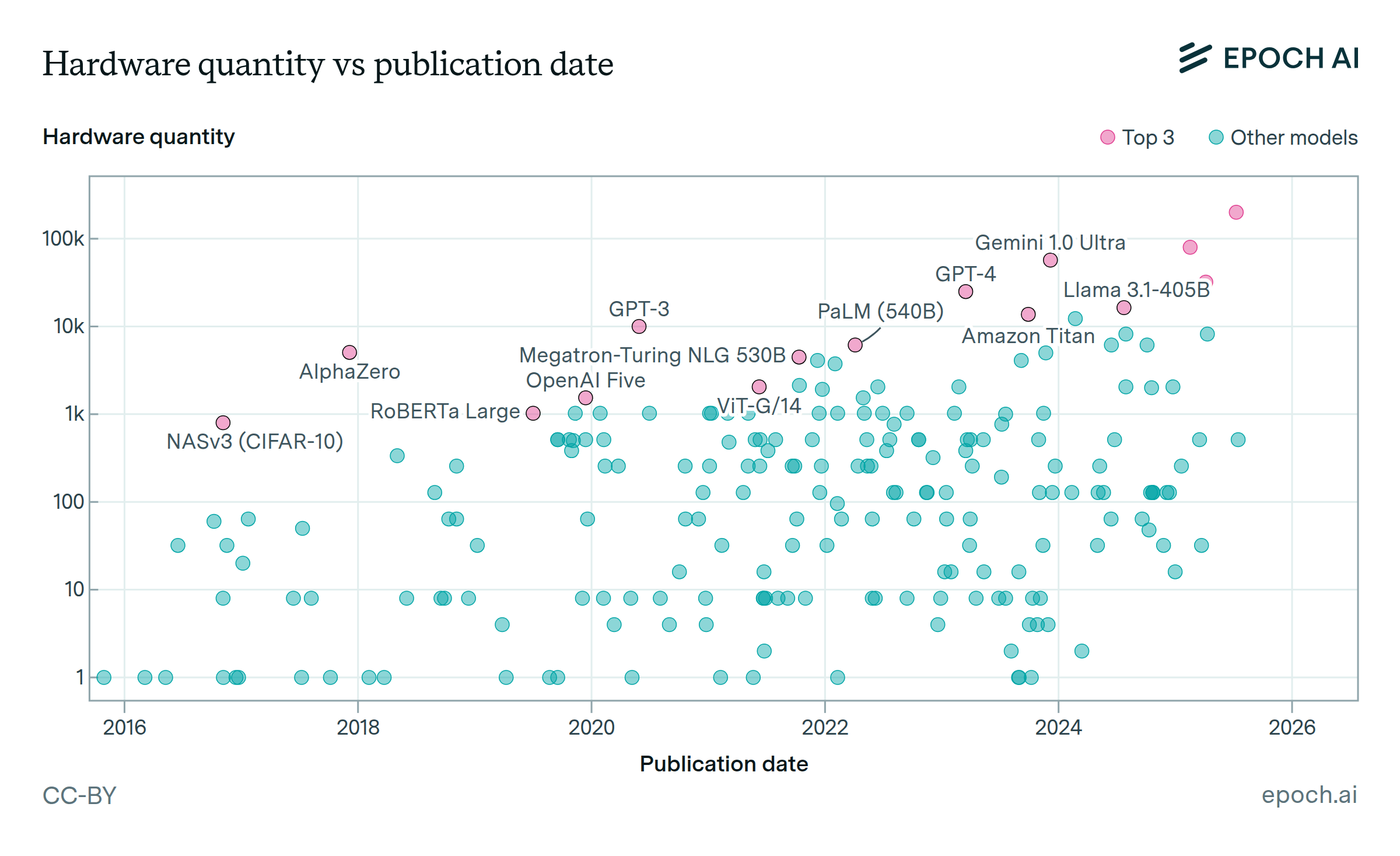

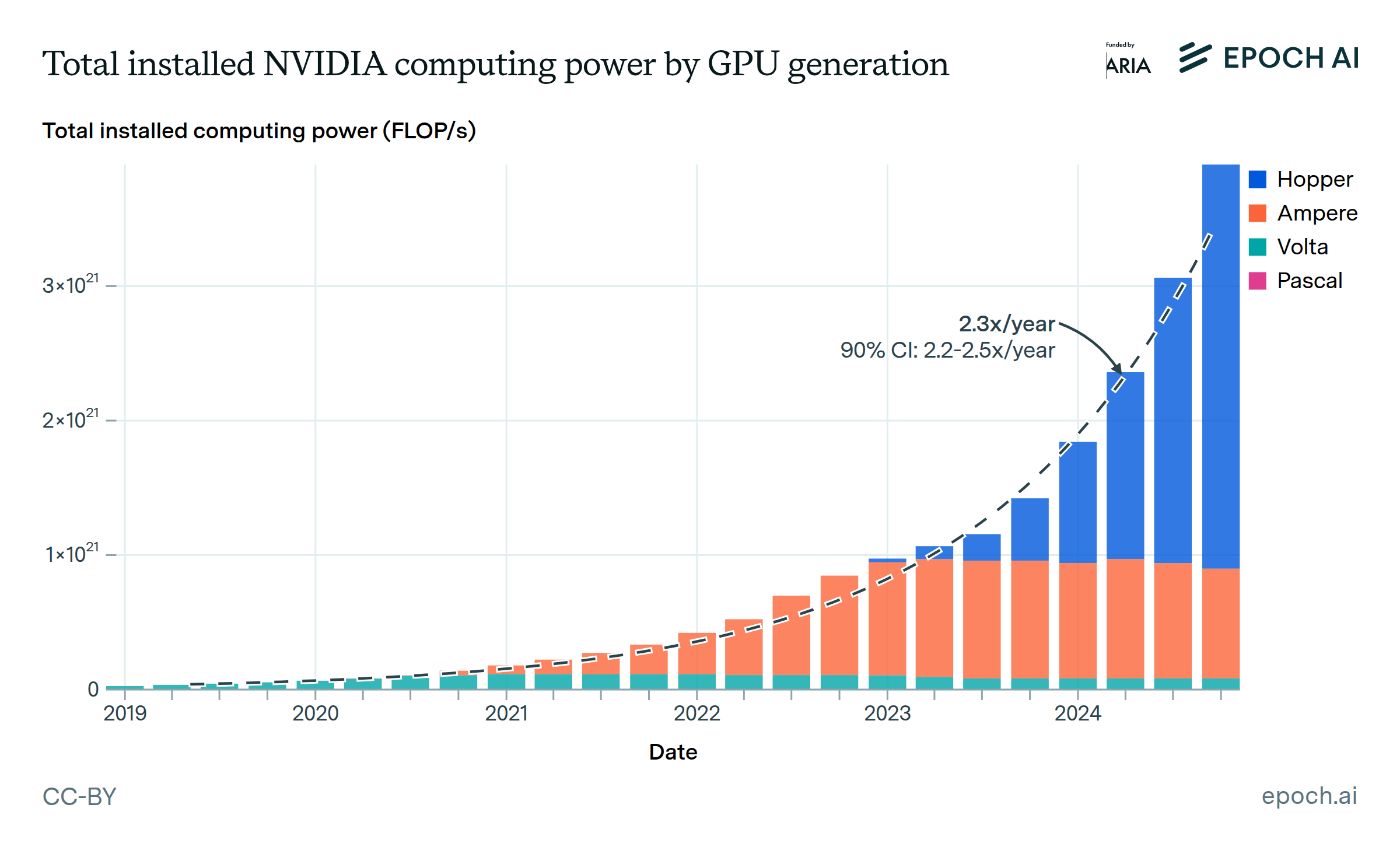

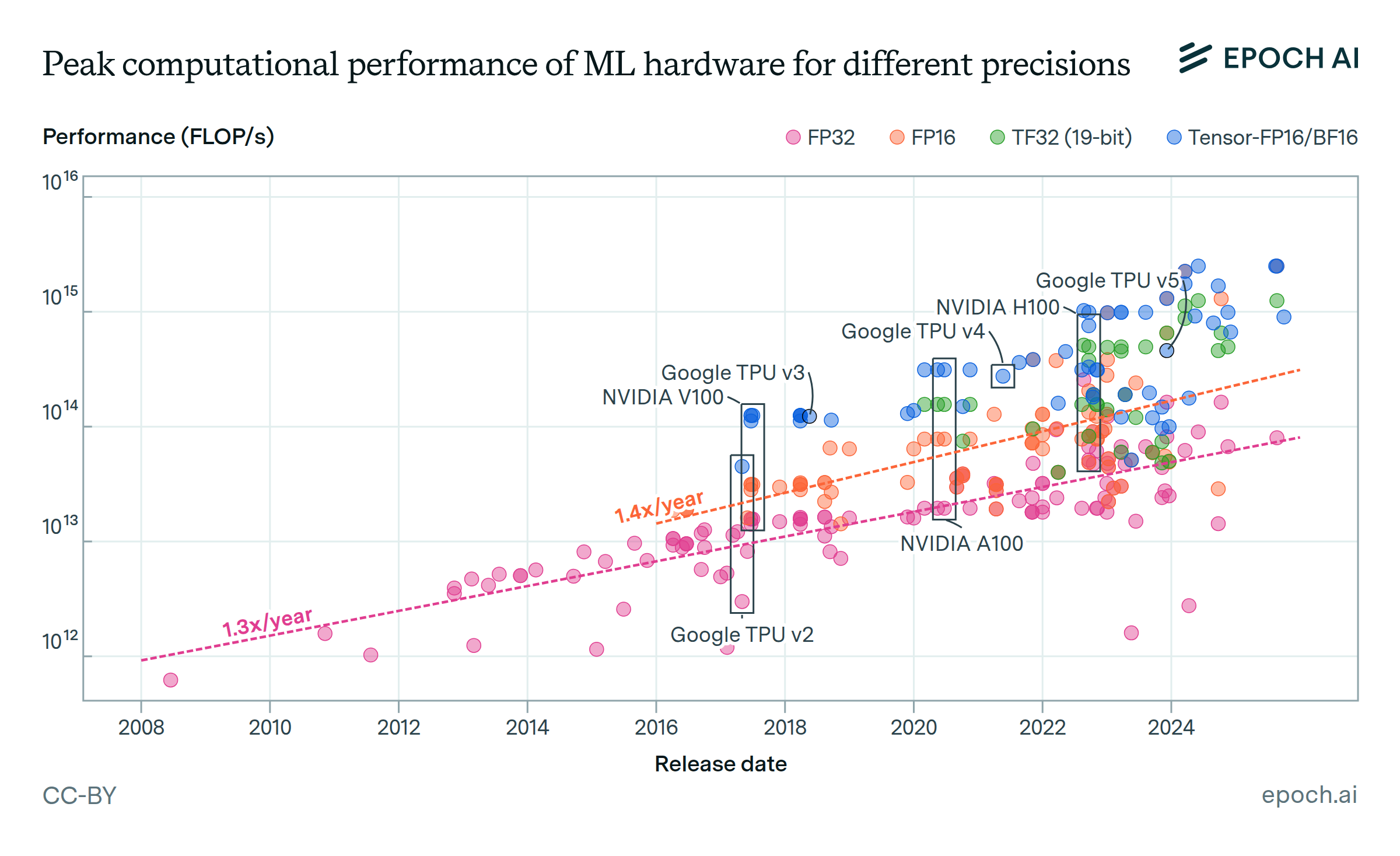

Data come from our AI Supercomputers dataset, which collects information on 728 supercomputers with dedicated AI accelerators, spanning from 2010 to the present. We estimate that these supercomputers represent approximately 10-20% (by performance) of all AI chips produced before 2025. We focus on the 502 AI supercomputers which became operational in 2019 or later, since these are most relevant to modern AI training.

When cost figures are not explicitly reported, we estimate a supercomputer's cost by first estimating its hardware cost from chip quantities and public pricing information. We then add the expected cost of additional hardware, such as networking switches and CPUs. Notably, we do not include the cost of power generation or data center construction. All cost figures are inflation-adjusted to January 2025 dollars.
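
The estimation steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Epoch's actual implementation. The names `ChipLine` and `estimate_supercomputer_cost` are hypothetical. The overhead for networking switches, CPUs and other non-accelerator hardware is modeled as a single assumed fraction of accelerator cost (`extra_hardware_fraction`). Inflation adjustment is modeled as a simple CPI ratio (`cpi_jan_2025 / cpi_at_price`). Neither choice is taken from the methodology described here. The example inputs are illustrative and are not drawn from the dataset.

```python
from dataclasses import dataclass


@dataclass
class ChipLine:
    """One accelerator type in a supercomputer (hypothetical record layout)."""
    quantity: int          # number of chips of this type
    unit_price_usd: float  # public price per chip, in dollars of the year it was observed
    cpi_at_price: float    # assumed: consumer price index level when the price was observed


def estimate_supercomputer_cost(
    chips: list[ChipLine],
    extra_hardware_fraction: float,
    cpi_jan_2025: float,
) -> float:
    """Estimate hardware cost in January 2025 dollars.

    extra_hardware_fraction is an assumed overhead for networking switches,
    CPUs, and other non-accelerator hardware, expressed as a fraction of
    accelerator cost. Power generation and data center construction are
    deliberately excluded, matching the scope described in the text.
    """
    if extra_hardware_fraction < 0:
        raise ValueError("extra_hardware_fraction must be non-negative")
    accelerator_cost = 0.0
    for line in chips:
        # Assumed approach: convert each price to January 2025 dollars via a CPI ratio.
        inflation_factor = cpi_jan_2025 / line.cpi_at_price
        accelerator_cost += line.quantity * line.unit_price_usd * inflation_factor
    # Scale up by the assumed share of additional (non-accelerator) hardware.
    return accelerator_cost * (1.0 + extra_hardware_fraction)


# Illustrative inputs only; these are not figures from the dataset.
example = [ChipLine(quantity=1_000, unit_price_usd=30_000.0, cpi_at_price=300.0)]
print(estimate_supercomputer_cost(example, extra_hardware_fraction=0.5, cpi_jan_2025=300.0))
# → 45000000.0
```

A flat overhead fraction keeps the sketch simple. A more detailed model could instead price switches and CPUs separately per system.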

For more information about the data, see Pilz et al., which describes the supercomputers dataset and analyzes key trends, as well as the dataset documentation.

