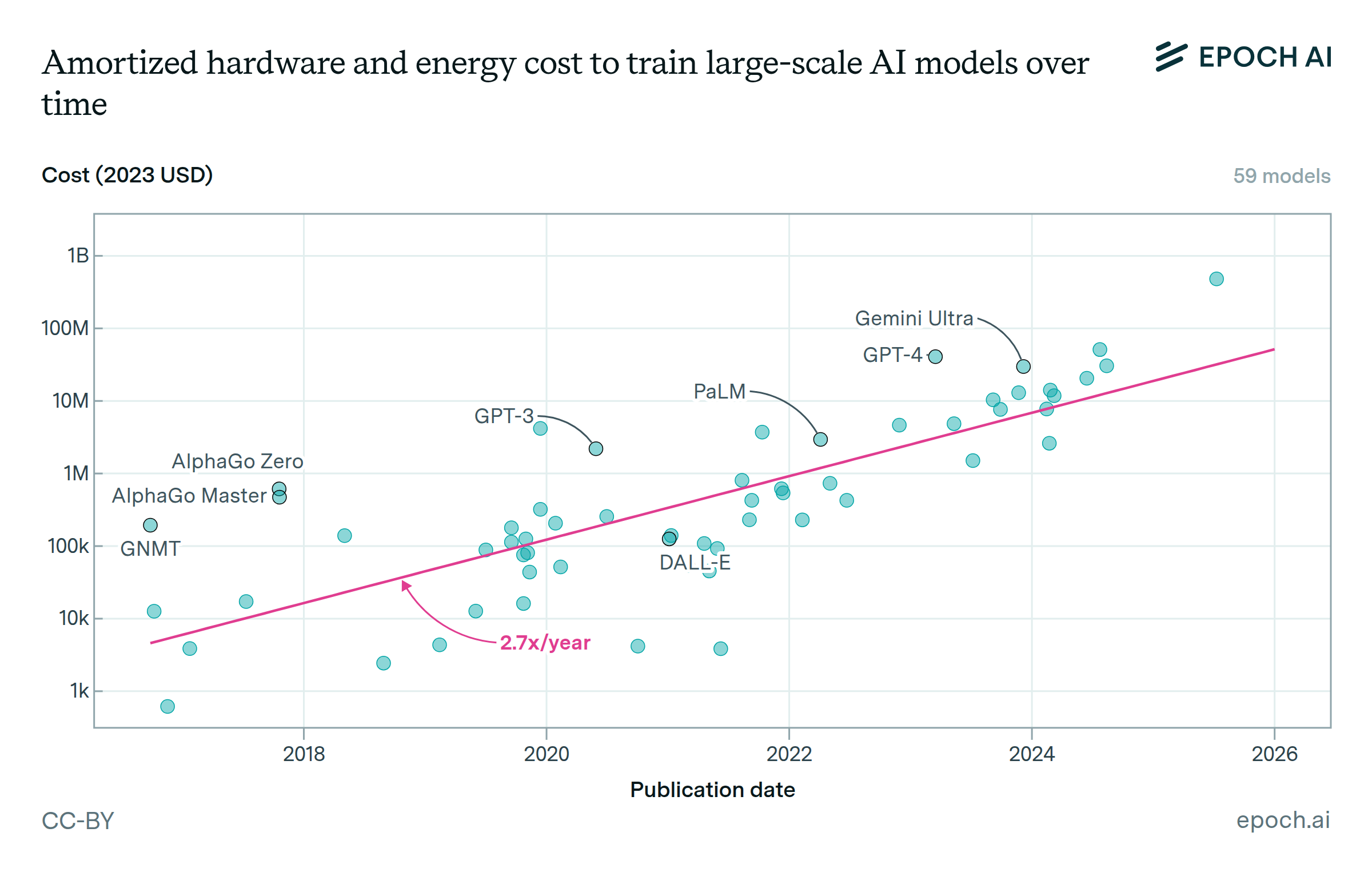

OpenAI’s overall compute expenses

Data on OpenAI’s overall compute spending in 2024 come from Epoch’s AI Companies dataset, ultimately via reports in The Information and The New York Times. These reports indicate that in 2024 OpenAI spent $3 billion on training compute, $1.8 billion on inference compute, and $1 billion on research compute, with the research figure amortized over “multiple years”. For the purpose of this visualization, we estimate that the amortization schedule for research compute was two years, which implies $2 billion in research compute expenses incurred in 2024. These figures are OpenAI’s cloud compute expenses in 2024, not the upfront capital cost of the data centers: OpenAI currently relies on cloud companies to access compute.

It is not known exactly how OpenAI distinguishes training compute from research compute, so we group the two ($3 billion for training plus $2 billion for research) as $5 billion in total “R&D” (research and development) compute expenses.
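The arithmetic behind these totals is short enough to write out. The sketch below assumes, as the text does, that the reported $1 billion is one year’s share of research spending under a two-year straight-line amortization schedule; the variable and function names are illustrative only.

```python
# OpenAI's reported 2024 compute figures, in billions of US dollars.
TRAINING = 3.0
INFERENCE = 1.8
RESEARCH_AMORTIZED = 1.0  # reported figure, amortized over "multiple years"

# Assumption from the text: a two-year amortization schedule.
AMORTIZATION_YEARS = 2


def research_expense(amortized_charge: float, years: int) -> float:
    """Undo straight-line amortization: total expense = yearly charge x years."""
    return amortized_charge * years


research = research_expense(RESEARCH_AMORTIZED, AMORTIZATION_YEARS)
rnd = TRAINING + research  # training and research grouped together as "R&D"
total = rnd + INFERENCE    # R&D plus inference

print(f"Research compute: ${research:.1f}B")
print(f"R&D compute:      ${rnd:.1f}B")
print(f"Total compute:    ${total:.1f}B")
```

A longer amortization schedule would raise the implied research spending proportionally; for example, three years would imply $3 billion.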

Final training cost of released OpenAI models

For the training expenses of OpenAI models, we looked at OpenAI’s released or announced models from the end of Q1 2024 through the end of Q1 2025, as a rough heuristic to account for the delay between when a model was trained and its release/announcement date. Significant models from this time period include GPT-4.5, GPT-4o, o3 (December preview), and Sora Turbo.

We estimated the cost of the final training runs for these models (the training runs that directly produced the released versions) as described below. The code and full methodology can be found in this notebook.

GPT-4.5: We estimate GPT-4.5’s cloud compute spending directly from estimates of its training cluster size, training duration, and price per GPU-hour, because we have more evidence about GPT-4.5’s training cluster and duration than about its training compute in FLOP.

The training details we used were as follows:

| Parameter | Low estimate (10th percentile) | High estimate (90th percentile) | Notes |
|---|---|---|---|
| Cluster size, in Nvidia H100s | 40,000 | 100,000 | Based on Microsoft’s Goodyear, Arizona cluster, drawing on Epoch’s analysis of satellite imagery (methodology forthcoming) and a third-party estimate. |
| Training run duration, in days | 90 | 165 | Likely began in May and ended in September. |
| Cloud cost per H100-hour | $1.50 | $3.00 | Consistent with the general industry price of about $2 per H100-hour. |
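The three parameters in the table multiply into a cloud cost: GPUs × hours × price per GPU-hour. A minimal sketch of propagating the intervals is shown below. It assumes each parameter is independent and lognormally distributed with the table’s 10th and 90th percentiles; that distributional choice is our assumption for illustration and not necessarily what the notebook does.

```python
import math
import random
from statistics import NormalDist

# (10th, 90th) percentile estimates from the table above.
CLUSTER_H100S = (40_000, 100_000)
DURATION_DAYS = (90, 165)
PRICE_PER_H100_HOUR = (1.50, 3.00)

Z90 = NormalDist().inv_cdf(0.9)  # z-score of the 90th percentile, ~1.2816


def lognormal_from_p10_p90(p10: float, p90: float) -> tuple[float, float]:
    """Return (mu, sigma) of a lognormal whose 10th/90th percentiles are p10/p90."""
    log_lo, log_hi = math.log(p10), math.log(p90)
    mu = (log_lo + log_hi) / 2
    sigma = (log_hi - log_lo) / (2 * Z90)
    return mu, sigma


def training_cost(h100s: float, days: float, price: float) -> float:
    """Cloud cost in dollars = GPUs x (days x 24 hours) x price per GPU-hour."""
    return h100s * days * 24 * price


def simulate_costs(n: int = 100_000, seed: int = 0) -> list[float]:
    """Monte Carlo draws of the training cost under independent lognormals."""
    rng = random.Random(seed)
    params = [
        lognormal_from_p10_p90(*interval)
        for interval in (CLUSTER_H100S, DURATION_DAYS, PRICE_PER_H100_HOUR)
    ]
    return [
        training_cost(*(rng.lognormvariate(mu, sigma) for mu, sigma in params))
        for _ in range(n)
    ]


costs = sorted(simulate_costs())
p10, p50, p90 = (costs[int(q * len(costs))] for q in (0.1, 0.5, 0.9))
print(f"10th: ${p10 / 1e6:,.0f}M  median: ${p50 / 1e6:,.0f}M  90th: ${p90 / 1e6:,.0f}M")
```

Multiplying all the low inputs ($129.6 million) or all the high inputs ($1.188 billion) brackets a wider range than the simulated 10th to 90th percentiles, because it is unlikely that all three parameters land at their extremes together. Under these assumptions the median cost equals the geometric mean of those two corner products, roughly $392 million.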

Other models: We use a simpler approach. We estimate each model’s training compute in floating-point operations (FLOP), then convert it to a cloud compute cost using standardized assumptions about GPU utilization and the price per GPU-hour. We work with confidence intervals for FLOP rather than point estimates so that our uncertainty about individual models carries through to the estimate of OpenAI’s aggregate training compute. The confidence intervals we used are shown below:

| Model | Low estimate (10th percentile) | High estimate (90th percentile) |
|---|---|---|
| GPT-4o | 1e25 FLOP | 5e25 FLOP |
| GPT-4o mini | 1e24 FLOP | 1e25 FLOP |
| Sora Turbo | 1e24 FLOP | 1e26 FLOP |
| o-series (o1-preview, o1, o1-mini, o3-mini, o3 December preview) | 1% of base model compute (base models presumed to be GPT-4o and GPT-4o mini) | 30% of base model compute (base models presumed to be GPT-4o and GPT-4o mini) |
| Other models (primarily updates of GPT-4o, such as GPT-4o image generation, GPT-4o CUA, and SearchGPT) | 1% of GPT-4o’s base model compute | 100% of GPT-4o’s base model compute |
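One way these intervals could be combined into an aggregate is sketched below. Several simplifications are ours, not the notebook’s: every quantity is treated as an independent lognormal fitted to its 10th and 90th percentiles; the whole o-series is modeled as a single fraction of combined GPT-4o and GPT-4o mini compute, and “other models” as a single fraction of GPT-4o compute. The FLOP-to-dollar conversion uses hypothetical constants (an H100 dense BF16 peak of about 989 TFLOP/s, 40% utilization, and $2 per H100-hour); the text does not state its standardized assumptions, so these values are placeholders only.

```python
import math
import random
from statistics import NormalDist

Z90 = NormalDist().inv_cdf(0.9)  # z-score of the 90th percentile

# (10th, 90th) percentile training-compute estimates from the table above.
BASE_FLOP = {
    "GPT-4o": (1e25, 5e25),
    "GPT-4o mini": (1e24, 1e25),
    "Sora Turbo": (1e24, 1e26),
}
O_SERIES_FRACTION = (0.01, 0.30)  # fraction of GPT-4o + GPT-4o mini compute
OTHER_FRACTION = (0.01, 1.00)     # fraction of GPT-4o compute

# Hypothetical conversion constants (placeholders, not values from the text).
H100_PEAK_FLOP_PER_S = 989e12  # approximate dense BF16 peak of an H100 SXM
UTILIZATION = 0.40
PRICE_PER_H100_HOUR = 2.00


def sample_p10_p90(rng: random.Random, p10: float, p90: float) -> float:
    """Draw from a lognormal whose 10th/90th percentiles are p10/p90."""
    mu = (math.log(p10) + math.log(p90)) / 2
    sigma = (math.log(p90) - math.log(p10)) / (2 * Z90)
    return rng.lognormvariate(mu, sigma)


def flop_to_cost(flop: float) -> float:
    """Convert training FLOP into dollars of H100 cloud time."""
    gpu_hours = flop / (H100_PEAK_FLOP_PER_S * UTILIZATION * 3600)
    return gpu_hours * PRICE_PER_H100_HOUR


def sample_total_flop(rng: random.Random) -> float:
    """One Monte Carlo draw of the summed training compute of all listed models."""
    flop = {name: sample_p10_p90(rng, *ci) for name, ci in BASE_FLOP.items()}
    base = flop["GPT-4o"] + flop["GPT-4o mini"]
    o_series = sample_p10_p90(rng, *O_SERIES_FRACTION) * base
    other = sample_p10_p90(rng, *OTHER_FRACTION) * flop["GPT-4o"]
    return sum(flop.values()) + o_series + other


rng = random.Random(0)
totals = sorted(sample_total_flop(rng) for _ in range(100_000))
p10, p50, p90 = (totals[int(q * len(totals))] for q in (0.1, 0.5, 0.9))
for label, total_flop in (("10th", p10), ("median", p50), ("90th", p90)):
    print(f"{label}: {total_flop:.2e} FLOP, ~${flop_to_cost(total_flop) / 1e6:,.0f}M")
```

Because Sora Turbo’s interval spans two orders of magnitude, it dominates the upper tail of the aggregate in this sketch. Changing the placeholder utilization or price rescales every dollar figure proportionally but leaves the FLOP totals unchanged.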