Epoch's work is free to use, distribute, and reproduce provided the source and authors are credited under the Creative Commons BY license.


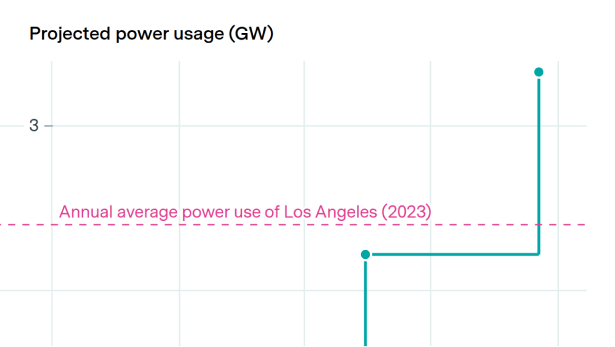

We estimate the fraction of power within frontier AI datacenters attributable to several nested categories of power use:

- GPUs

- Servers (GPUs plus CPUs, interconnect, storage, etc. within a server)

- All IT equipment (all of the above plus interserver switches, management nodes, etc.)

- Total facility power (all IT equipment plus overhead such as cooling, lighting, and losses in power delivery)

All calculations assume a datacenter running at peak operation.
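Because each category contains the one before it, the shares can be read as a chain of ratios against total facility power. The following is a minimal sketch of that arithmetic, not the method used for the estimates: the class name `PowerBreakdown`, its fields, and the megawatt values are hypothetical placeholders chosen only to make the arithmetic easy to follow. The ratio of facility power to IT power is the standard power usage effectiveness (PUE) metric.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerBreakdown:
    """Nested power categories at peak operation, in megawatts (hypothetical)."""

    gpu_mw: float       # GPUs only
    server_mw: float    # GPUs plus CPUs, interconnect, storage within servers
    it_mw: float        # servers plus inter-server switches, management nodes
    facility_mw: float  # IT equipment plus cooling, lighting, power losses

    def __post_init__(self) -> None:
        levels = [self.gpu_mw, self.server_mw, self.it_mw, self.facility_mw]
        # Each category must contain the previous one, so values cannot shrink.
        if levels[0] <= 0 or any(a > b for a, b in zip(levels, levels[1:])):
            raise ValueError("categories must be positive and nested")

    def fractions(self) -> dict[str, float]:
        """Share of total facility power attributable to each category."""
        return {
            "gpus": self.gpu_mw / self.facility_mw,
            "servers": self.server_mw / self.facility_mw,
            "it_equipment": self.it_mw / self.facility_mw,
            "facility": 1.0,
        }

    def pue(self) -> float:
        """Power usage effectiveness: facility power over IT power."""
        return self.facility_mw / self.it_mw


# Placeholder numbers for illustration only, not measured or estimated values.
example = PowerBreakdown(gpu_mw=50.0, server_mw=70.0, it_mw=80.0, facility_mw=100.0)
shares = example.fractions()
for name, share in shares.items():
    print(f"{name}: {share:.0%}")
print(f"PUE: {example.pue():.2f}")
```

The validation step encodes the nesting assumption directly: an input where, say, server power is below GPU power is rejected rather than silently producing a share above 100%.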


Explore this data

Open database of AI data centers using satellite and permit data to show compute, power use, and construction timelines.