Frontier AI chips depend on three key inputs: advanced logic dies, high-bandwidth memory (HBM), and CoWoS advanced packaging (which integrates these components together). We estimate that the four largest AI chip designers collectively consumed around 90% of global CoWoS capacity and HBM supply in 2025, while consuming only 12% of advanced logic die production.

With AI chips absorbing nearly all available CoWoS and HBM supply in 2025, expanding production requires building new manufacturing facilities with long lead times. Logic fabrication was a softer constraint: the large majority of advanced-node capacity serves other markets, so AI’s share could grow by outbidding competing demand rather than waiting for new fabs. However, today’s newest AI chips increasingly use the 3nm process node, where they will likely take up a much larger share of production and could run into capacity constraints.

Epoch's work is free to use, distribute, and reproduce provided the source and authors are credited under the Creative Commons BY license.

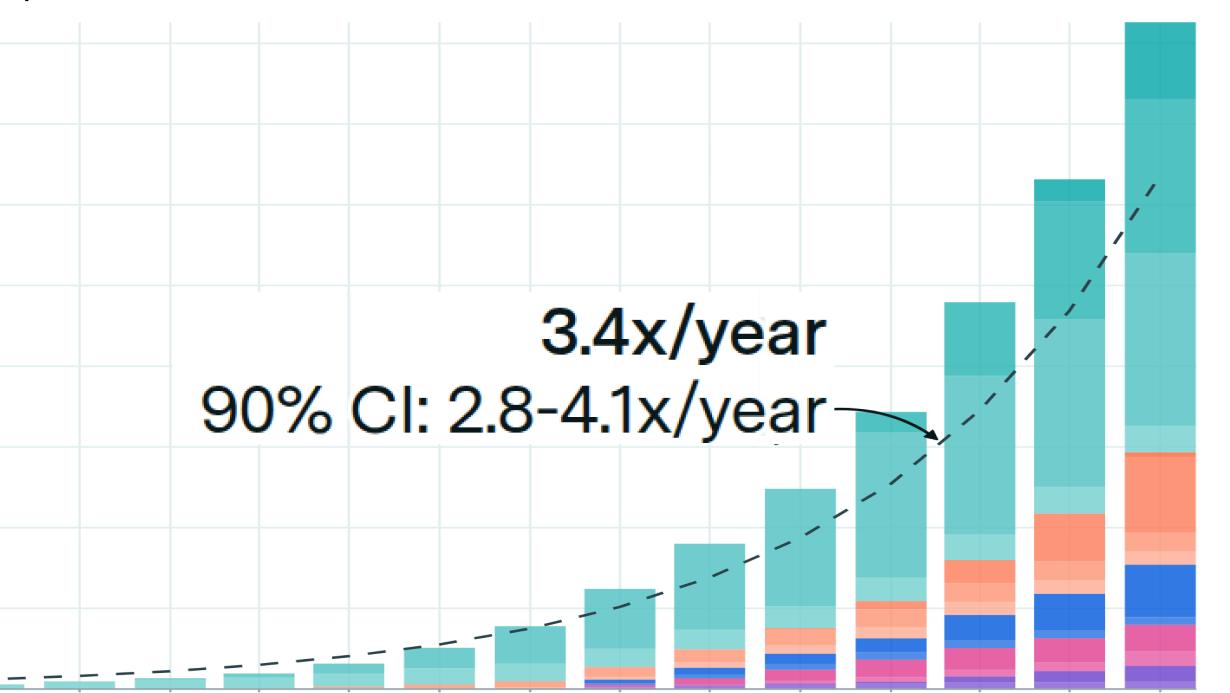


Frontier AI chips like NVIDIA’s H100 and B200 require three critical supply chain inputs: advanced logic dies fabricated at leading-edge process nodes, high-bandwidth memory (HBM) stacks, and CoWoS (Chip-on-Wafer-on-Substrate) advanced packaging to integrate these components. While all three inputs are necessary, they face very different supply-demand dynamics. See our detailed methodology for full technical documentation.

We find that the four largest AI chip designers — NVIDIA, Google, AMD, and Amazon — collectively consumed over 90% of global CoWoS packaging capacity and HBM supply by value in 2025, but only about 12% of advanced logic die production. This asymmetry suggests that scaling AI chip production in 2025 was primarily bottlenecked by CoWoS and HBM manufacturing.

Data

We estimate what share of global advanced semiconductor supply each major AI chip designer consumed in 2025. We track three components: advanced logic dies (3–5nm wafers), HBM, and CoWoS packaging. We focus on 3–5nm process nodes because these are the nodes used by current frontier AI accelerators: NVIDIA’s Hopper and Blackwell GPUs use TSMC’s 4N/4NP processes, while Google’s TPUv6 and Amazon’s Trainium2 use 5nm. AMD’s MI350 and Google’s TPUv7 use 3nm. For each component, we estimate total global supply over the 2025 production window and compare that supply against demand from the four largest AI accelerator designers: NVIDIA, Google, AMD, and Amazon.

Manufacturing Lags: Each supply chain component is consumed at a different point in the chip manufacturing timeline. Logic dies are fabricated roughly 16 weeks before shipment; CoWoS packaging occurs roughly 8 weeks before shipment. HBM must be procured before CoWoS packaging begins, so it too is consumed at least 8 weeks before shipment. Rather than use a simple calendar-year window for supply, we shift each supply estimate to match when 2025-production chips were actually drawing on that component. All three lags are modeled as uncertain ranges and sampled in the Monte Carlo simulation.
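As a rough illustration of the lag shift described above, the sketch below moves a calendar-year production window back by a fixed number of weeks. The function name `supply_window` is hypothetical, and it uses a single point estimate for each lag, whereas the analysis samples the lags from uncertain ranges.

```python
from datetime import date, timedelta

def supply_window(production_year: int, lag_weeks: float) -> tuple[date, date]:
    """Return the (start, end) dates over which a component was consumed by
    chips shipped in `production_year`, assuming one fixed lag (a simplification)."""
    lag = timedelta(weeks=lag_weeks)
    return date(production_year, 1, 1) - lag, date(production_year, 12, 31) - lag

# Point-estimate lags taken from the text; the real model treats them as ranges.
logic_start, logic_end = supply_window(2025, lag_weeks=16)
cowos_start, cowos_end = supply_window(2025, lag_weeks=8)
print("logic dies:", logic_start, "to", logic_end)
print("CoWoS and HBM:", cowos_start, "to", cowos_end)
```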

We estimate supply and demand separately for each of the three supply chain components.

Advanced logic wafer supply: TSMC controls approximately 90% of global advanced-node fabrication, making its reported revenue a reliable proxy for total supply. We divide TSMC’s node-level revenue by estimated wafer prices to get TSMC wafer volumes, then scale up by TSMC’s market share to reach global supply. The 16-week lag shifts the relevant window to approximately September 2024 through August 2025, which we construct as 1/3 of 2024 production plus 2/3 of 2025 production. Our lag-adjusted estimate of global advanced-node wafer supply is approximately 3.2–3.5 million wafers.
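The arithmetic in this step can be sketched as follows. The function names are hypothetical, and the revenue, wafer price, and annual supply figures in the usage lines are placeholders chosen for illustration, not values from our parameter sheet.

```python
def global_wafer_supply(node_revenue_usd: float,
                        wafer_price_usd: float,
                        tsmc_share: float = 0.90) -> float:
    """Revenue / price gives TSMC wafers; dividing by TSMC's share scales to global."""
    tsmc_wafers = node_revenue_usd / wafer_price_usd
    return tsmc_wafers / tsmc_share

def lag_adjusted_supply(supply_prev_year: float,
                        supply_curr_year: float,
                        prev_year_weight: float = 1 / 3) -> float:
    """Blend two calendar years to approximate the lag-shifted window
    (1/3 of the prior year plus 2/3 of the current year for a ~16-week lag)."""
    return (prev_year_weight * supply_prev_year
            + (1 - prev_year_weight) * supply_curr_year)

# Placeholder inputs: $18B of node revenue at $18,000 per wafer.
print(round(global_wafer_supply(18e9, 18_000)))
# Placeholder annual supplies of 3.0M (prior year) and 3.6M (current year) wafers.
print(round(lag_adjusted_supply(3.0e6, 3.6e6)))
```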

HBM supply: We measure HBM in dollar value rather than GB and anchor to Micron’s publicly stated total addressable market estimates of $18B in 2024 and $35B in 2025. HBM supply is modeled as growing throughout the year rather than being distributed evenly, reflecting the strong demand ramp. The 8-week lag shifts the window to approximately November 2024 through November 2025, giving a lag-adjusted market size of approximately $31.6B.
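One way to picture a ramping (rather than flat) supply is an exponential growth curve calibrated to the two annual totals. This functional form is an assumption made for illustration: the text states only that supply grows through the year. The function name `lagged_hbm_market` and the single fixed lag are likewise hypothetical simplifications.

```python
import math

def lagged_hbm_market(prev_year_total: float,
                      year_total: float,
                      lag_weeks: float) -> float:
    """Market size over a one-year window shifted back by `lag_weeks`.

    ASSUMPTION: supply rate grows as A * exp(k * t), with k chosen so the
    integrals over the two calendar years match the two annual totals.
    Under that curve, a one-year window starting (1 - lag) years into the
    prior year integrates to prev_year_total * exp(k * (1 - lag)).
    """
    k = math.log(year_total / prev_year_total)  # continuous annual growth rate
    lag_years = lag_weeks / 52
    return prev_year_total * math.exp(k * (1 - lag_years))

# Micron TAM anchors from the text, in billions of dollars.
print(round(lagged_hbm_market(18.0, 35.0, lag_weeks=8), 1))
```

With these anchors and a fixed 8-week lag, the curve gives a value close to the lag-adjusted figure quoted above, though the underlying model samples the lag from a range and may use a different ramp shape.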

CoWoS packaging supply: TSMC does not publicly disclose packaging capacity, so we rely on year-end wafers per month (WPM) benchmarks from SemiWiki and TrendForce: approximately 13,000–16,000 WPM at 2023-end, 35,000–40,000 WPM at 2024-end, and 65,000–75,000 WPM at 2025-end. We linearly interpolate between year-end anchors to estimate capacity for each month in 2024 and 2025, then calculate the 8-week lag-adjusted window. The lag-adjusted supply denominator is approximately 580,000–625,000 wafers.
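The interpolation and lag shift can be sketched as below. This is a simplified reading of the procedure: it uses the midpoint of each benchmark range, treats the 8-week lag as a whole two-month shift, and takes each month's capacity at month-end. The function names are hypothetical.

```python
# Year-end WPM anchors: midpoints of the benchmark ranges quoted in the text.
ANCHORS = {2023: 14_500, 2024: 37_500, 2025: 70_000}

def monthly_capacity(year: int, month: int) -> float:
    """Linearly interpolate wafers-per-month between the two year-end anchors
    that bracket the given month (month 12 equals that year's anchor)."""
    start, end = ANCHORS[year - 1], ANCHORS[year]
    return start + (end - start) * month / 12

def lagged_cowos_supply(production_year: int, lag_months: int) -> float:
    """Sum monthly capacity over a 12-month window shifted back by `lag_months`."""
    total = 0.0
    for m in range(12):
        # Count months from year 0, shift back by the lag, convert back.
        index = production_year * 12 + m - lag_months
        year, month_zero_based = divmod(index, 12)
        total += monthly_capacity(year, month_zero_based + 1)
    return total

print(round(lagged_cowos_supply(2025, lag_months=2)))
```

Under these simplifying assumptions the window runs from November 2024 through October 2025 and sums to roughly 597,000 wafers, which falls inside the range reported above.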

Chip volumes across NVIDIA, Google, AMD, and Amazon are drawn from Epoch’s Chip Sales Hub, which estimates quarterly chip sales from financial filings, analyst reports, and primary research. NVIDIA and AMD sell chips to external customers and hold inventory in anticipation of demand: raw materials, work-in-progress, and finished goods all consume supply chain capacity before any chip is sold. To account for this, we add an inventory adjustment for these two companies, derived from year-over-year changes in each inventory category reported in each company’s 10-K. We do not apply this adjustment to Google and Amazon, for two reasons. First, they are the end consumers of their own chips: they produce TPUs and Trainium primarily for internal deployment, so we assume chips flow directly into use without accumulating as finished goods inventory. Second, isolating AI accelerator inventory from their broader balance sheets, which cover large, diverse businesses, introduces enough uncertainty to make the adjustment unreliable. As a result, we likely slightly underestimate supply chain consumption attributable to Google and Amazon, since we do not account for chips currently in production or raw materials they may be holding.

After applying inventory adjustments, we translate chip volumes into logic wafer, CoWoS, and HBM consumption using technical specifications for each chip: die sizes, dies per wafer, logic and packaging yield rates, packages per CoWoS wafer, and HBM capacity in GB per chip.
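A minimal sketch of this conversion is shown below. The `ChipSpec` fields mirror the specifications listed above, but the class, the function name, and all numeric values are hypothetical placeholders. The sketch also assumes that packages lost to packaging yield still consume their logic dies and HBM, which the text does not state explicitly.

```python
from dataclasses import dataclass

@dataclass
class ChipSpec:
    dies_per_package: int          # logic dies in one finished chip
    dies_per_wafer: float          # gross logic dies per wafer
    logic_yield: float             # fraction of logic dies that are good
    packages_per_cowos_wafer: float
    packaging_yield: float         # fraction of packages that are good
    hbm_gb: float                  # HBM capacity per chip
    hbm_usd_per_gb: float          # HBM is measured in dollars, so price is needed

def consumption(chips: float, spec: ChipSpec) -> dict[str, float]:
    """Convert good-chip volume into logic wafers, CoWoS wafers, and HBM dollars."""
    # ASSUMPTION: failed packages still use up their dies and HBM.
    packages_started = chips / spec.packaging_yield
    good_dies_needed = packages_started * spec.dies_per_package
    return {
        "logic_wafers": good_dies_needed / (spec.dies_per_wafer * spec.logic_yield),
        "cowos_wafers": packages_started / spec.packages_per_cowos_wafer,
        "hbm_usd": packages_started * spec.hbm_gb * spec.hbm_usd_per_gb,
    }

# Placeholder specification, not taken from any real chip.
example = ChipSpec(dies_per_package=2, dies_per_wafer=60, logic_yield=0.8,
                   packages_per_cowos_wafer=16, packaging_yield=0.9,
                   hbm_gb=192, hbm_usd_per_gb=10.0)
print(consumption(1_000_000, example))
```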

Analysis

We derive supply share estimates for each designer by combining shipment volumes with inventory adjustments, then translating the resulting unit volumes into supply chain consumption using per-chip technical specifications.

All uncertain parameters — including wafer prices, packaging and logic die yields, CoWoS capacity benchmarks, manufacturing lag durations, HBM price per GB, and the GPU-attributed share of NVIDIA and AMD inventory — are modeled as triangular distributions. These ranges are informed by analyst reports, company disclosures, and bill-of-materials estimates that can be found in our parameter sheet.

We use a Monte Carlo simulation, sampling each parameter independently from its distribution in each draw. For each draw, we compute the implied supply chain consumption for every chip and divide by the supply denominator to produce a share estimate. Final results are reported as the median with a 90% confidence interval.
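The simulation loop can be sketched as follows for a single component (CoWoS). Every parameter range here is a placeholder for illustration, the chip volume is held fixed for brevity, and the names `PARAMS` and `simulate_cowos_share` are hypothetical. Only the overall pattern (independent triangular draws, a share per draw, median and 5th to 95th percentile) follows the description above.

```python
import random
import statistics

# (low, mode, high) for each uncertain input. All values are placeholders.
PARAMS = {
    "packages_per_wafer": (14.0, 16.0, 18.0),
    "packaging_yield": (0.85, 0.90, 0.95),
    "cowos_supply_wafers": (580_000.0, 600_000.0, 625_000.0),
}

def simulate_cowos_share(chips: float, n_draws: int = 20_000, seed: int = 0):
    """Return (median, 5th percentile, 95th percentile) of the CoWoS share."""
    rng = random.Random(seed)
    shares = []
    for _ in range(n_draws):
        # Sample each parameter independently; note that random.triangular
        # takes its arguments in the order (low, high, mode).
        draw = {name: rng.triangular(low, high, mode)
                for name, (low, mode, high) in PARAMS.items()}
        wafers_used = chips / (draw["packages_per_wafer"] * draw["packaging_yield"])
        shares.append(wafers_used / draw["cowos_supply_wafers"])
    shares.sort()
    lower = shares[int(0.05 * n_draws)]
    upper = shares[int(0.95 * n_draws)]
    return statistics.median(shares), lower, upper

median, lower, upper = simulate_cowos_share(chips=7_000_000)
print(f"median {median:.1%}, 90% interval {lower:.1%} to {upper:.1%}")
```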

Inventory dollars are converted to implied chip units using per-chip bill-of-materials costs. For finished goods, we use the full assembled cost per chip. For work-in-progress (WIP), we assume a 50/50 split between early-stage units (logic die only) and late-stage units (logic die, HBM, and CoWoS packaging), and divide WIP dollars by the blended average cost across both stages.
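As a worked illustration of the WIP conversion, the sketch below applies the 50/50 blend to placeholder cost figures. The function name and all dollar values are hypothetical; only the blending rule comes from the description above.

```python
def wip_units(wip_usd: float,
              logic_die_cost: float,
              hbm_cost: float,
              cowos_cost: float,
              early_share: float = 0.5) -> dict[str, float]:
    """Convert WIP inventory dollars into implied early- and late-stage units."""
    early_cost = logic_die_cost                          # logic die only
    late_cost = logic_die_cost + hbm_cost + cowos_cost   # die + HBM + packaging
    blended_cost = early_share * early_cost + (1 - early_share) * late_cost
    total_units = wip_usd / blended_cost
    return {
        "total": total_units,
        "early_stage": early_share * total_units,
        "late_stage": (1 - early_share) * total_units,
    }

# Placeholder costs per chip: $1,000 logic, $3,000 HBM, $1,000 CoWoS.
print(wip_units(1e9, logic_die_cost=1_000, hbm_cost=3_000, cowos_cost=1_000))
```

In this example the blended cost is $3,000, so $1B of WIP implies about 333,000 units, half of which are assumed to have already consumed HBM and CoWoS capacity.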

See our detailed methodology here.

Limitations

Chip unit volumes are estimated from revenue data rather than directly observed. Companies report revenue at the segment level, not by individual accelerator. We infer unit volumes by dividing segment revenue by estimated average selling prices and assumed product mix, which introduces uncertainty, particularly during product transition quarters when multiple generations are sold simultaneously.
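The inference described here amounts to the following calculation. The function name, revenue figure, product mix, and selling prices are all placeholders for illustration.

```python
def implied_units(segment_revenue_usd: float,
                  revenue_mix: dict[str, float],
                  asp_usd: dict[str, float]) -> dict[str, float]:
    """Split segment revenue by assumed product mix, then divide by each
    product's estimated average selling price to get unit volumes."""
    if abs(sum(revenue_mix.values()) - 1.0) > 1e-9:
        raise ValueError("revenue mix shares must sum to 1")
    return {product: segment_revenue_usd * share / asp_usd[product]
            for product, share in revenue_mix.items()}

# Placeholder transition quarter with two generations on sale at once.
units = implied_units(
    segment_revenue_usd=30e9,
    revenue_mix={"older_gen": 0.4, "newer_gen": 0.6},
    asp_usd={"older_gen": 24_000, "newer_gen": 30_000},
)
print(units)
```

An error in either the assumed mix or the selling prices shifts the implied unit counts proportionally, which is why transition quarters carry more uncertainty.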

Per-chip technical specifications — including die sizes, dies per wafer, logic yield rates, packages per CoWoS wafer, and packaging yields — are estimated from public chip specifications, analyst estimates, media reports, and our own estimations rather than disclosed by chip designers or TSMC. These specifications directly affect the conversion of unit volumes into wafer and HBM consumption, and errors here propagate into all share estimates.

The GPU-attributed share of NVIDIA and AMD inventory is estimated rather than reported. We proxy each company’s AI accelerator inventory using the GPU share of revenue as an attribution factor, which may not precisely reflect the actual composition of their balance sheets.

The contents of each inventory bucket are assumed rather than observed. Companies don’t disclose the composition of raw materials, WIP, or finished goods at this level of detail. We assume that raw materials related to AI chips are primarily HBM, that advance purchase commitments to TSMC and memory suppliers sit in purchase obligations rather than on the balance sheet, and that fabricated logic dies, once received from TSMC, are classified as WIP. If the true accounting differs, our estimates of HBM and logic wafer consumption from inventory could be off.

We do not model inventory for Google and Amazon, both of which design chips for internal deployment rather than merchant sale. This likely leads to an understatement of their true supply chain consumption, particularly for HBM, where raw material inventory ahead of CoWoS packaging could be significant given the TPUv7 and Trainium ramps.

The 50/50 early/late-stage split applied to work-in-progress inventory is an assumption about the average stage of chips in the WIP pipeline, and the true composition is not observable from public filings.